This is the multi-page printable view of this section. Click here to print.

Documentation

- 1: Announcements

- 2: Overview

- 2.1: Architecture

- 3: Components

- 3.1: Core Components

- 3.1.1: Crier

- 3.1.2: Deck

- 3.1.2.1: How to setup GitHub Oauth

- 3.1.2.2: CSRF attacks

- 3.1.3: Hook

- 3.1.4: Horologium

- 3.1.5: Prow-Controller-Manager

- 3.1.6: Sinker

- 3.1.7: Tide

- 3.1.7.1: Configuring Tide

- 3.1.7.2: Maintainer's Guide to Tide

- 3.1.7.3: PR Author's Guide to Tide

- 3.2: Optional Components

- 3.2.1: Branchprotector

- 3.2.2: Exporter

- 3.2.3: gcsupload

- 3.2.4: Gerrit

- 3.2.5: HMAC

- 3.2.6: jenkins-operator

- 3.2.7: status-reconciler

- 3.2.8: tot

- 3.2.8.1: fallbackcheck

- 3.2.9: Gangway (Prow API)

- 3.2.10: Sub

- 3.3: CLI Tools

- 3.3.1: checkconfig

- 3.3.2: config-bootstrapper

- 3.3.3: generic-autobumper

- 3.3.4: invitations-accepter

- 3.3.5: mkpj

- 3.3.6: mkpod

- 3.3.7: Peribolos

- 3.3.8: Phony

- 3.4: Pod Utilities

- 3.4.1: clonerefs

- 3.4.2: entrypoint

- 3.4.3: initupload

- 3.4.4: sidecar

- 3.5: Plugins

- 3.5.1: approve

- 3.5.1.1: Reviewers and Approvers

- 3.5.2: branchcleaner

- 3.5.3: lgtm

- 3.5.4: updateconfig

- 3.6: External Plugins

- 3.6.1: cherrypicker

- 3.7: Deprecated Components

- 3.7.1: cm2kc (clustermap to kubeconfig)

- 3.7.2: Phaino

- 3.7.3: Plank

- 3.7.4: tackle

- 3.8: Undocumented Components

- 4: GKE Build Clusters

- 5: Contribution Guidelines

- 6: Metrics

- 7: Building, Testing, and Updating Prow

- 8: Local Development Environment

- 9: Local Development with a Remote KinD Cluster

- 10: Local Development with Tilt

- 11: Deploying Prow

- 12: Developing and Contributing to Prow

- 13: Getting more out of Prow

- 14: GitHub API Library

- 15: ghProxy

- 15.1: ghCache

- 15.2: Additional throttling algorithm

- 16: Inrepoconfig

- 17: Life of a Prow Job

- 18: Prow Configuration

- 19: Prow Secrets Management

- 20: Gerrit

- 21: ProwJobs

- 22: Setting up Private Deck

- 23: Spyglass

- 23.1: Spyglass Architecture

- 23.2: Build a Spyglass Lens

- 23.3: JUnit lens

- 23.4: REST API coverage lens

- 24: Using Prow at Scale

- 25: Understanding Started.json and Finished.json

- 26: Testing Prow

- 26.1: Run Prow integration tests

- 26.1.1: Fake Git Server (FGS)

- 27: Legacy Snapshot

1 - Announcements

New features

New features added to each component:

-

April 20, 2024 The

ghcache_cache_parititionsPrometheus metric has been deprecated in favor ofghcache_cache_partitions. Besides spelling both metrics are identical. -

April 20, 2024 The

validate-supplemental-prow-config-hirarchycheck incheckconfighas been renamed tovalidate-supplemental-prow-config-hierarchy; the old name is now deprecated. -

October 20, 2023 The update to Inrepoconfig handling will break users if they have symlinks inside

.prow/that point to targets elsewhere in the codebase. See this comment for details. -

January 20, 2023 Remove k8s-ci-robot at-mention autoresponse for instances that does not warrant additional explanation in the details section.

-

August 4, 2022 override plugin will now override checkruns set by GitHub Actions and other CI systems on a PR.

-

June 8, 2022

deck.rerun_auth_configscan optionally be replaced withdeck.default_rerun_auth_configswhich supports a new format that is a slice of filters with associated rerun auth configs rather than a map. Currently entries can filter by repo and/or cluster. The old field is still supported and will not be deprecated. -

April 6, 2022 Highlight and pin interesting lines. To do this, shift-click on log lines in the buildlog lens. The URL fragment causes the same lines to be highlighted next page load. Additionally, when viewing a GCS log pressing the pin button saves the highlight. The saved highlight automatically displays next page load.

-

January 24, 2022 It is possible now to define GitHub Apps bots as trusted users to allow automatic tests trigger without relying on

/ok-to-testfrom organization member. Trigger and DCO plugins configuration now support additional fieldtrusted_apps, which contains list of GitHub Apps bot usernames without[bot]suffix. -

January 11, 2022 Trigger plugin can now trigger failed github jobs. The feature needs to be enabled in the

triggerssection of theplugin.yamlconfig and can be specified per trigger as follows:triggers: - repos: - org/repo - org2 trigger_github_workflows: true -

August 24, 2021 Postsubmit Prow jobs now support the

always_runfield. This field interacts with therun_if_changedandskip_if_only_changedfields as follows:- (NEW) If the field is explicitly set to

always_run: false, then the Postsubmit will not run automatically. The intention is to allow other triggers outside of a GitHub change, such as a Pub/Sub event, to trigger the job. See this issue for the motivation. However ifrun_if_changedorskip_if_only_changedis also set, then those triggers are determined first; if for whatever reason they cannot be determined, then the job will not run automatically (and wait for another trigger such as a Pub/Sub event as mentioned above). - If the field is explicitly set to

always_run: true, then the Postsubmit job will always run. Also trying to setrun_if_changedorskip_if_only_changedin the same Postsubmit job will result in a config error. This mutual exclusivity matches the configuration behavior of Presubmit jobs, which also disallow combiningalways_run: truetogether withrun_if_changedorskip_if_only_changed. - If

always_runis not set (missing from the job config):- If both

run_if_changedandskip_if_only_changedare not set: same as old behavior (Postsubmit job will run automatically upon a GitHub change). - If one of

run_if_changedorskip_if_only_changedis set: same as old behavior (running will depend onrun_if_changedorskip_if_only_changed).

- If both

- (NEW) If the field is explicitly set to

-

May 14th, 2021: All components that interact with GitHub newly allow client-side throttling customization via

--github-hourly-tokensand--github-allowed-burstparameters. A notable exception to this is Tide which has custom throttling logic and does not expose these two new options. Other existing custom options in branchprotector, peribolos, status-reconciler and needs-rebase (--tokens/--hourly-tokensetc.) are deprecated and will be removed in August 2021. -

April 12th, 2021 End of grace period for storage bucket validation, additional buckets have to be allowed by adding them to the

deck.additional_allowed_bucketslist. -

March 9th, 2021 Tide batchtesting will now continue to test a given batch even when more PRs became eligible while a test failed. You can disable this by setting

tide.prioritize_existing_batches.<org or org/repo>: falsein your Prow config. -

March 3, 2021

plank.default_decoration_configscan optionally be replaced withplank.default_decoration_config_entrieswhich supports a new format that is a slice of filters with associated decoration configs rather than a map. Currently entries can filter by repo and/or cluster. The old field is still supported and will not be deprecated. -

February 23, 2021 New format introduced in

plugins.yaml. Repos can be excluded from plugin definition at org level usingexcluded_reposnotation. The previous format will be deprecated in July 2021, see https://github.com/kubernetes/test-infra/issues/20631. -

November 2, 2020 Tide is now able to respect checkruns.

-

September 15, 2020 Added validation to Deck that will restrict artifact requests based on storage buckets. Opt-out by setting

deck.skip_storage_path_validationin your Prow config. Buckets specified in job configs (<job>.gcs_configuration.bucket) and plank configs (plank.default_decoration_configs[*].gcs_configuration.bucket) are automatically allowed access. Additional buckets can be allowed by adding them to thedeck.additional_allowed_bucketslist. (This feature will be enabled by default ~Jan 2021. For now, you will begin to notice violation warnings in your logs.) -

August 31th, 2020 Added

gcs_browser_prefixesfield in spyglass configuration.gcs_browser_prefixwill be deprecated in February 2021. You can now specify different values for different repositories. The format should be in org, org/repo or ‘*’ which is the default value. -

July 13th, 2020 Configuring

job_url_prefix_configwithgcs/prefix is now deprecated. Please configure a job url prefix without thegcs/storage provider suffix. From now on the storage provider is appended automatically so multiple storage providers can be used for builds of the same repository. For now we still handle the old configuration format, this will be removed in September 2020. To be clear handling of URLs with/view/gcsin Deck is not deprecated. -

June 23rd, 2020 An hmac tool was added to automatically reconcile webhooks and hmac tokens for the orgs and repos integrated with your prow instance.

-

June 8th, 2020 A new informer-based Plank implementation was added. It can be used by deploying the new prow-controller-manager binary. We plan to gradually move all our controllers into that binary, see https://github.com/kubernetes/test-infra/issues/17024

-

May 31, 2020 ‘–gcs-no-auth’ in Deck is deprecated and not used anymore. We always fall back to an anonymous GCS client now, if all other options fail. This flag will be removed in July 2020.

-

May 25, 2020 Added

--blob-storage-workersand--kubernetes-blob-storage-workersflags to crier. The flags--gcs-workersand--kubernetes-gcs-workersare now deprecated and will be removed in August 2020. -

May 13, 2020 Added a

decorate_all_jobsoption to job configuration that allows to control whether jobs are decorated by default. Individual jobs can use thedecorateoption to override this setting. -

March 25, 2020 Added a

report_templatesoption to the Plank config that allows to specify different report templates for each organization or a specific repository. Thereport_templateoption is deprecated and it will be removed on September 2020 which is going to be replaced with the*value inreport_templates. -

January 03, 2020 Added a

pr_status_base_urlsoption to the Tide config that allows to specify different tide’s URL for each organization or a specific repository. Thepr_status_base_urlwill be deprecated on June 2020 and it will be replaced with the*value inpr_status_base_urls. -

November 05, 2019 The

config-updaterplugin supports update configs on build clusters by usingclusters. The fields namespace and additional_namespaces are deprecated. -

October 27, 2019 The

trusted_orgfunctionality in trigger is being deprecated in favour of being more explicit in the fact that org members or repo collaborators are the trusted users. This option will be removed completely in January 2020. -

October 07, 2019 Added a

default_decoration_configsoption to the Plank config that allows to specify different plank’s default configuration for each organization or a specific repository.default_decoration_configwill be deprecated in April 2020 and it will be replaced with the*value indefault_decoration_configs. -

August 29, 2019 Added a

batch_size_limitoption to the Tide config that allows the batch size limit to be specified globally, per org, or per repo. Values default to 0 indicating no size limit. A value of -1 disables batches. -

July 30, 2019

authorized_usersinrerun_auth_configfor deck will becomegithub_users. -

July 19, 2019 deck will soon remove its default value for

--cookie-secret-file. If you set--oauth-urlbut not--cookie-secret-file, add--cookie-secret-file=/etc/cookie-secretto your deck instance. The default value will be removed at the end of October 2019. -

July 2, 2019 prow defaults to report status for both presubmit and postsubmit jobs on GitHub now.

-

June 17, 2019 It is now possible to configure the channel for the Slack reporter directly on jobs via the

.reporter_config.slack.channelconfig option -

May 13, 2019 New

plankconfigpod_running_timeoutis added and defaulted to two days to allow plank abort pods stuck in running state. -

April 25, 2019

--job-configinperiboloshas never been used; it is deprecated and will be removed in July 2019. Remove the flag from any calls to the tool. -

April 24, 2019

file_weight_countin blunderbuss is being deprecated in favour of the more currentmax_request_countfunctionality. Please ensure your configuration is up to date before the end of May 2019. -

March 12, 2019 tide now records a history of its actions and exposes a filterable view of these actions at the

/tide-historydeck path. -

March 9, 2019 prow components now support reading gzipped config files

-

February 13, 2019 prow (both plank and crier) can set status on the commit for postsubmit jobs on github now! Type of jobs can be reported to github is gated by a config field like

github_reporter: job_types_to_report: - presubmit - postsubmitnow and default to report for presubmit only. The default will change in April to include postsubmit jobs as well You can also add

skip_report: trueto your post-submit jobs to skip reporting if you enable postsubmit reporting on. -

January 15, 2019

approvenow considers self-approval and github review state by default. Configure withrequire_self_approvalandignore_review_state. Temporarily revert to old defaults withuse_deprecated_2018_implicit_self_approve_default_migrate_before_july_2019anduse_deprecated_2018_review_acts_as_approve_default_migrate_before_july_2019. -

January 12, 2019

blunderblussplugin now provides a new command,/auto-cc, that triggers automatic review requests. -

January 7, 2019

implicit_self_approvewill becomerequire_self_approvalin the second half of this year. -

January 7, 2019

review_acts_as_approvewill becomeignore_review_statein the second half of this year. -

October 10, 2018

tidenow supports the-repo:foo/bartag in queries via theexcludedReposYAML field. -

October 3, 2018

welcomenow supports a configurable message on a per-org, or per-repo basis. Please note that this includes a config schema change that will break previous deployments of this plugin. -

August 22, 2018

spyglassis a pluggable viewing framework for artifacts produced by Prowjobs. See a demo here! -

July 13, 2018

blunderblussplugin will now supportrequired_reviewersin OWNERS file to specify a person or github team to be cc’d on every PR that touches the corresponding part of the code. -

June 25, 2018

updateconfigplugin will now support update/remove keys from a glob match. -

June 05, 2018

blunderbussplugin may now suggest approvers in addition to reviewers. Useexclude_approvers: trueto revert to previous behavior. -

April 10, 2018

claplugin now supports/check-clacommand to force rechecking of the CLA status. -

February 1, 2018

updateconfigwill now update any configmap on merge -

November 14, 2017

jenkins-operator:0.58exposes prometheus metrics. -

November 8, 2017

horologium:0.14prow periodic job now support cron triggers. See https://godoc.org/gopkg.in/robfig/cron.v2 for doc to the cron library we are using.

Breaking changes

Breaking changes to external APIs (labels, GitHub interactions, configuration or deployment) will be documented in this section. Prow is in a pre-release state and no claims of backwards compatibility are made for any external API. Note: versions specified in these announcements may not include bug fixes made in more recent versions so it is recommended that the most recent versions are used when updating deployments.

-

August 24th, 2022 Deck by default validating storage buckets, can still opt out by setting

deck.skip_storage_path_validation: truein your Prow config. Buckets specified in job configs (<job>.gcs_configuration.bucket) and plank configs (plank.default_decoration_configs[*].gcs_configuration.bucket) are automatically allowed access. Additional buckets can be allowed by adding them to thedeck.additional_allowed_bucketslist. -

May 27th, 2022 Crier flags

--gcs-workersand--kubernetes-gcs-workersare removed in favor of--blob-storage-workersand--kubernetes-blob-storage-workers. -

May 27th, 2022 The

owners_dir_blacklistfield in prow config is removed in favor ofowners_dir_denylist. -

February 22nd, 2022 Since prow version

v20220222-acb5731b85, the entrypoint container in a prow job will run as--copy-mode-only, instead of/bin/cp /entrypoint /tools/entrypoint. Entrypoint images before the mentioned version will not work with--copy-mode-only, and entrypoint image since the mentioned version will not work with/bin/cp /entrypoint /tools/entrypoint. In another word, prow versions newer than or equal tov20220222-acb5731b85will stop working with pod utilities versions older thanv20220222-acb5731b85. If your prow instance is bumped byprow/cmd/generic-autobumperthen you should not be affected. -

February 22nd, 2022 Since prow version

v20220222-acb5731b85, prow images pushed to gcr.io/k8s-prow will be built with ko, and the binaries will be placed under/ko-app/, for example /robots/commenter is pushed to gcr.io/k8s-prow/commenter, the commenter binary is located at/ko-app/commenterin the image, prow jobs that use this image will update tocommand: - /ko-app/commenterto make it work, alternatively, the command could also becommand: - commenteras/ko-appis added to$PATHenv var in the image. -

February 22nd, 2022 Since prow version

v20220222-acb5731b85, static files indeckimage will be stored under/var/run/ko/directory. -

October 27th, 2021 The checkconfig flag

--prow-yaml-repo-pathno longer defaults to/home/prow/go/src/github.com/<< prow-yaml-repo-name >>/.prow.yamlwhen--prow-yaml-repo-nameis set. The defaulting has instead been replaced with the assumption that the Prow YAML file/directory can be found in the current working directory if--prow-yaml-repo-pathis not specified. If you are running checkconfig from a decorated ProwJobs as is typical, then this is already the case. -

September 16th, 2021 The ProwJob CRD manifest has been extended to specify a schema. Unfortunately, this results in a huge manifest which in turn makes the standard

kubectl applyfail, as the last-applied annotation it generates exceeds the maximum annotation size. If you are using Kubernetes 1.18 or newer, you can add the--server-side=trueargument to work around this. If not, you can use a schemaless manifest -

September 15th, 2021

autobumpremoved, please usegeneric-autobumperinstead, see example config -

April 16th, 2021 Flagutil remove default value for

--github-token-path. -

April 15th, 2021 Sinker requires –dry-run=false (default is true) to function correctly in production.

-

April 14th, 2021 Deck remove default value for

--cookie-secret-file. -

April 12th, 2021 Horologium now uses a cached client, which requires it to have watch permissions for Prowjobs on top of the already-required list and create.

-

April 11th, 2021 The plank binary has been removed. Please use the Prow Controller Manager instead, which provides a more modern implementation of the same functionality.

-

April 1st, 2021 The

owners_dir_blacklistfield in prow config has been deprecated in favor ofowners_dir_denylist. The support ofowners_dir_blacklistwill be stopped in October 2021. -

April 1st, 2021 The

labels_blacklistfield in verify-owners plugin config is deprecated in favor oflabels_denylist. The support forlabels_blacklistshall be stopped in October 2021. -

January 24th, 2021 Planks Pod pending and Pod scheduling timeout defaults where changed from 24h each to the more reasonable 10 minutes/5 minutes, respectively.

-

January 1, 2021 Support for

whitelistandbranch_whitelistfields in Slack merge warning configuration is discontinued. You can useexempt_usersandexempt_branchesfields instead. -

November 24, 2020 The

requiresigplugin has been removed in favor of therequire-matching-labelplugin which provides equivalent functionality (example plugin config) -

November 14, 2020 The

whitelistandbranch_whitelistfields in Slack merge warning were deprecated on August 22, 2020 in favor of the newexempt_usersandexempt_branchesfields. The support for these fields shall be stopped in January 2021. -

November 11th, 2020 The prow-controller-manager and sinker now require RBAC to be set up to manage their leader lock in the

coordination.k8s.iogroup. See here -

November, 2020 The deprecated

namespaceandadditional_namespacesproperties have been removed from the config updater plugin for more details. -

November, 2020 The

blacklistflag in status reconciler has been deprecated in favor ofdenylist. The support ofblacklistwill be stopped in February 2021. -

October, 2020 The

plankbinary has been deprecated in favor of the more modern implementation in the prow-controller-manager that provides the same functionality. Check out its README or check out its deployment and rbac manifest. The plank binary will be removed in February, 2021. -

September 14th, 2020 Sinker now requires

LISTandWATCHpermissions for pods -

September 2, 2020 The already deprecated

namespaceandadditional_namespacessettings in the config updater will be removed in October, 2020 -

August 28, 2020

tidenow ignores archived repositories in queries. -

August 28, 2020 The

Clustersformat and associated--build-clusterflag has been removed. -

August 24, 2020 The deprecated reporting functionality has been removed from Plank, use crier with

--github-workers=1instead Use a.kube/configwith the--kubeconfigflag to specify credentials for external build clusters. -

August 22, 2020 The

whitelistandbranch_whitelistfields in Slack merge warning are deprecated in favor of the newexempt_usersandexempt_branchesfields. -

July 17, 2020 Slack reporter will no longer report all states of a Prow job if it has

Channelspecified on the Prow job config. Instead, it will report thejob_states_to_reportconfigured in the Prow job or in the Prow core config if the former does not exist. -

May 18, 2020

expiryfield has been replaced withcreated_atin the HMAC secret. -

April 24, 2020 Horologium now defaults to

--dry-run=true -

April 23, 2020 Explicitly setting

--config-pathis now required. -

April 23, 2020 Update the

autobumpimage to at leastv20200422-8c8546d74before June 2020. -

April 23, 2020 Deleted deprecated

default_decoration_config. -

April 22, 2020 Deleted the

file_weight_countblunderbuss option. -

April 16, 2020 The

docs-no-retestprow plugin has been deleted. The plugin was deprecated in January 2020. -

April 14, 2020 GitHub reporting via plank is deprecated, set –github-workers=1 on crier before July 2020.

-

March 27, 2020 The deprecated

allow_cancellationsoption has been removed from Plank and the Jenkins operator. -

March 19, 2020 The

rerun_auth_configconfig field has been deprecated in favor of the newrerun_auth_configsfield which allows configuration on a global, organization or repo level.rerun_auth_configwill be removed in July 2020. -

November 21, 2019 The boskos metrics component replaced the existing prometheus metrics with a single, label-qualified metric. Metrics are now served at

/metricson port 9090. This actually happened August 5th, but is being documented now. Details: https://github.com/kubernetes/test-infra/pull/13767 -

November 18, 2019 The

mkbuild-clustercommand-line utility andbuild-clusterformat is deprecated and will be removed in May 2020. Usegencredand thekubeconfigformat as an alternative. -

November 14, 2019 The

slack_reporterconfig field has been deprecated in favor of the newslack_reporter_configsfield which allows configuration on a global, organization or repo level.slack_reporterwill be removed in May 2020. -

November 7, 2019 The

plank.allow_cancellationsandjenkins_operators.allow_cancellationssettings are deprecated and will be removed and set to alwaystruein March 2020. -

October 7, 2019 Prow will drop support for the deprecated knative-builds in November 2019.

-

September 24, 2019 Sending an http

GETrequest to the/hookendpoint now returns a405(Method Not Allowed) instead of a200(OK). -

September 8, 2019 The deprecated

job_url_prefixoption has been removed from Plank. -

May 2, 2019 All components exposing Prometheus metrics will now either push them to the Prometheus PushGateway, if configured, or serve them locally on port 9090 at

/metrics, if not configured (the default). -

April 26, 2019

blunderbuss,approve, and other plugins that read OWNERS now treatowners_dir_blacklistas a list of regular expressions matched against the entire (repository-relative) directory path of the OWNERS file rather than as a list of strings matched exactly against the basename only of the directory containing the OWNERS file. -

April 2, 2019

hook,deck,horologium,tide,plankandsinkerwill no longer provide a default value for the--config-pathflag. It is required to explicitly provide--config-pathwhen upgrading to a new version of these components that were previously relying on the default--config-path=/etc/config/config.yaml. -

March 29, 2019 Custom logos should be provided as full paths in the configuration under

deck.branding.logosand will not implicitly be assumed to be under the static assets directory. -

February 26, 2019 The

job_url_prefixoption fromplankhas been deprecated in favor of the newjob_url_prefix_configoption which allows configuration on a global, organization or repo level.job_url_prefixwill be removed in September 2019. -

February 13, 2019

horologiumandsinkerdeployments will soon require--dry-run=falsein production, please set this before March 15. At that time flag will default to –dry-run=true instead of –dry-run=false. -

February 1, 2019 Now that

hookandtidewill no longer post “Skipped” statuses for jobs that do not need to run, it is not possible to require those statuses with branch protection. Therefore, it is necessary to run thebranchprotectorfrom at least version510db59before upgradingtideto that version. -

February 1, 2019

horologiumandsinkernow support the--dry-runflag, so you must pass--dry-run=falseto keep the previous behavior (see Feb 13 update). -

January 31, 2019

subno longer supports the--masterurlflag for connecting to the infrastructure cluster. Use--kubeconfigwith--contextfor this. -

January 31, 2019

crierno longer supports the--masterurlflag for connecting to the infrastructure cluster. Use--kubeconfigwith--contextfor this. -

January 27, 2019 Jobs that do not run will no longer post “Skipped” statuses.

-

January 27, 2019 Jobs that do not run always will no longer be required by branch protection as they will not always produce a status. They will continue to be required for merge by

tideif they are configured as required. -

January 27, 2019 All support for

run_after_successjobs has been removed. Configuration of these jobs will continue to parse but will ignore the field. -

January 27, 2019

hookwill now correctly honor therun_alwaysfield on Gerrit presubmits. Previously, if this field was unset it would have defaulted totrue; now, it will correctly default tofalse. -

January 22, 2019

sinkerprefers.kube/configinstead of the customClustersfile to specify credentials for external build clusters. The flag name has changed from--build-clusterto--kubeconfig. Migrate before June 2019. -

November 29, 2018

plankwill no longer default jobs withdecorate: trueto haveautomountServiceAccountToken: falsein their PodSpec if unset, if the job explicitly setsserviceAccountName -

November 26, 2018 job names must now match

^[A-Za-z0-9-._]+$. Jobs that did not match this before were allowed but did not provide a good user experience. -

November 15, 2018 the

hookservice account now requires RBAC privileges to createConfigMapsto support new functionality in theupdateconfigplugin. -

November 9, 2018 Prow gerrit client label/annotations now have a

prow.k8s.io/namespace prefix, if you have a gerrit deployment, please bump both cmd/gerrit and cmd/crier. -

November 8, 2018

planknow defaults jobs withdecorate: trueto haveautomountServiceAccountToken: falsein their PodSpec if unset. Jobs that used the default service account should explicitly set this field to maintain functionality. -

October 16, 2018 Prow tls-cert management has been migrated from kube-lego to cert-manager.

-

October 12, 2018 Removed deprecated

buildIdenvironment variable from prow jobs. UseBUILD_ID. -

October 3, 2018

-github-token-filereplaced with-github-token-pathfor consistency withbranchprotectorandperiboloswhich were already using-github-token-path.-github-token-filewill continue to work through the remainder of 2018, but it will be removed in early 2019. The following commands are affected:cherrypicker,hook,jenkins-operator,needs-rebase,phony,plank,refresh, andtide. -

October 1, 2018 bazel is the one official way to build container images. Please use prow/bump.sh and/or bazel run //prow:release-push

-

Sep 27, 2018 If you are setting explicit decorate configs, the format has changed from

- name: job-foo decorate: true timeout: 1to

- name: job-foo decorate: true decoration_config: timeout: 1 -

September 24, 2018 the

splicecomponent has been deleted following the deletion of mungegithub. -

July 9, 2018

milestoneformat has changed frommilestone: maintainers_id: <some_team_id> maintainers_team: <some_team_name>to

repo_milestonerepo_milestone: <some_repo_name>: maintainers_id: <some_team_id> maintainers_team: <some_team_name> -

July 2, 2018 the

triggerplugin will now trust PRs from repo collaborators. Useonly_org_members: truein the trigger config to temporarily disable this behavior. -

June 14, 2018 the

updateconfigplugin will only add data to yourConfigMapsusing the basename of the updated file, instead of using that and also duplicating the data using the name of theConfigMapas a key -

June 1, 2018 all unquoted

booleanfields in config.yaml that were unmarshall into typestringnow need to be quoted to avoid unmarshalling error. -

May 9, 2018

decklogs for jobs run asPodswill now return logs for the"test"container only. -

April 2, 2018

updateconfigformat has been changed frompath/to/some/other/thing: configNameto

path/to/some/other/thing: Name: configName # If unspecified, Namespace default to the value of ProwJobNamespace. Namespace: myNamespace -

March 15, 2018

jenkins_operatoris removed from the config in favor ofjenkins_operators. -

March 1, 2018

MilestoneStatushas been removed from the plugins Configuration in favor of theMilestonewhich is shared between two plugins: 1)milestonestatusand 2)milestone. The milestonestatus plugin now uses theMilestoneobject to get the maintainers team ID -

February 27, 2018

jenkins-operatordoes not use$BUILD_IDas a fallback to$PROW_JOB_IDanymore. -

February 15, 2018

jenkins-operatorcan now accept the--tot-urlflag and will use the connection tototto vend build identifiers asplankdoes, giving control over where in GCS artifacts land to Prow and away from Jenkins. Furthermore, the$BUILD_IDvariable in Jenkins jobs will now correctly point to the build identifier vended bytotand a new variable,$PROW_JOB_ID, points to the identifier used to link ProwJobs to Jenkins builds.$PROW_JOB_IDfallbacks to$BUILD_IDfor backwards-compatibility, ie. to not break in-flight jobs during the time of the jenkins-operator update. -

February 1, 2018 The

config_updatersection inplugins.yamlnow uses amapsobject instead ofconfig_file,plugin_filestrings. Please switch over before July. -

November 30, 2017 If you use tide, you’ll need to switch your query format and bump all prow component versions to reflect the changes in #5754.

-

November 14, 2017

horologium:0.17fixes cron job not being scheduled. -

November 10, 2017 If you want to use cron feature in prow, you need to bump to:

hook:0.181,sinker:0.23,deck:0.62,splice:0.32,horologium:0.15plank:0.60,jenkins-operator:0.57andtide:0.12to avoid error spamming from the config parser. -

November 7, 2017

plank:0.56fixes bug introduced inplank:0.53that affects controllers using an empty kubernetes selector. -

November 7, 2017

jenkins-operator:0.51provides jobs with the$BUILD_IDvariable as well as the$buildIdvariable. The latter is deprecated and will be removed in a future version. -

November 6, 2017

plank:0.55providesPodswith the$BUILD_IDvariable as well as the$BUILD_NUMBERvariable. The latter is deprecated and will be removed in a future version. -

November 3, 2017 Added

EmptyDirvolume type. To update tohook:0.176+orhorologium:0.11+the following components must have the associated minimum versions:deck:0.58+,plank:0.54+,jenkins-operator:0.50+. -

November 2, 2017

plank:0.53changes thetypelabel key toprow.k8s.io/typeand thejobannotation key toprow.k8s.io/jobadded in pods. -

October 14, 2017

deck:0:53+needs to be updated in conjunction withjenkins-operator:0:48+since Jenkins logs are now exposed from the operator anddeckneeds to use theexternal_agent_logsoption in order to redirect requests to the locationjenkins-operatorexposes logs. -

October 13, 2017

hook:0.174,plank:0.50, andjenkins-operator:0.47drop the deprecatedgithub-bot-nameflag. -

October 2, 2017

hook:0.171: The label plugin was split into three plugins (label, sigmention, milestonestatus). Breaking changes:- The configuration key for the milestone maintainer team’s ID has been

changed. Previously the team ID was stored in the plugins config at key

label»milestone_maintainers_id. Now that the milestone status labels are handled in themilestonestatusplugin instead of thelabelplugin, the team ID is stored at keymilestonestatus»maintainers_id. - The sigmention and milestonestatus plugins must be enabled on any repos that require them since their functionality is no longer included in the label plugin.

- The configuration key for the milestone maintainer team’s ID has been

changed. Previously the team ID was stored in the plugins config at key

-

September 3, 2017

sinker:0.17now deletes pods labeled byplank:0.42in order to avoid cleaning up unrelated pods that happen to be found in the same namespace prow runs pods. If you run other pods in the same namespace, you will have to manually delete or label the prow-owned pods, otherwise you can bulk-label all of them with the following command and let sinker collect them normally:kubectl label pods --all -n pod_namespace created-by-prow=true -

September 1, 2017

deck:0.44andjenkins-operator:0.41controllers no longer provide a default value for the--jenkins-token-fileflag. Cluster administrators should provide--jenkins-token-file=/etc/jenkins/jenkinsexplicitly when upgrading to a new version of these components if they were previously relying on the default. For more context, please see this pull request. -

August 29, 2017 Configuration specific to plugins is now held in the

pluginsConfigMapand serialized in this repo in theplugins.yamlfile. Cluster administrators upgrading tohook:0.148or newer should move plugin configuration from the mainConfigMap. For more context, please see this pull request.

Project updates

- October 28, 2022 Documentation migration: existing Markdown files in k/t-i have been migrated over to https://docs.prow.k8s.io/docs/legacy-snapshot. The old locations now have “tombstones” to redirect to the new location. See https://github.com/kubernetes/test-infra/pull/27818 for details.

2 - Overview

Prow is a Kubernetes based CI/CD system. Jobs can be triggered by various types of events and report their status to many different services. In addition to job execution, Prow provides GitHub automation in the form of policy enforcement, chat-ops via /foo style commands, and automatic PR merging.

See the GoDoc for library docs. Please note that these libraries are intended for use by prow only, and we do not make any attempt to preserve backwards compatibility.

For a brief overview of how Prow runs jobs take a look at “Life of a Prow Job”.

To see common Prow usage and interactions flow, see the pull request interactions sequence diagram.

Functions and Features

- Job execution for testing, batch processing, artifact publishing.

- GitHub events are used to trigger post-PR-merge (postsubmit) jobs and on-PR-update (presubmit) jobs.

- Support for multiple execution platforms and source code review sites.

- Pluggable GitHub bot automation that implements

/foostyle commands and enforces configured policies/processes. - GitHub merge automation with batch testing logic.

- Front end for viewing jobs, merge queue status, dynamically generated help information, and more.

- Automatic deployment of source control based config.

- Automatic GitHub org/repo administration configured in source control.

- Designed for multi-org scale with dozens of repositories. (The Kubernetes Prow instance uses only 1 GitHub bot token!)

- High availability as benefit of running on Kubernetes. (replication, load balancing, rolling updates…)

- JSON structured logs.

- Prometheus metrics.

Documentation

Getting started

- With your own Prow deployment: “Deploying Prow”

- With developing for Prow: “Developing and Contributing to Prow”

- As a job author: ProwJobs

More details

Tests

The stability of prow is heavily relying on unit tests and integration tests.

- Unit tests are co-located with prow source code

- Integration tests utilizes kind with hermetic integration tests. See instructions for adding new integration tests for more details

Useful Talks

KubeCon 2020 EU virtual

KubeCon 2018 EU

KubeCon 2018 China

KubeCon 2018 Seattle

- Behind you PR: K8s with K8s on K8s

- Using Prow for Testing Outside of K8s

- Jenkins X (featuring Tide)

- SIG Testing Intro

- SIG Testing Deep Dive

Misc

Prow in the wild

Prow is used by the following organizations and projects:

- Kubernetes

- This includes kubernetes, kubernetes-client, kubernetes-csi, and kubernetes-sigs.

- OpenShift

- This includes openshift, openshift-s2i, operator-framework, and some repos in containers and heketi.

- Istio

- Knative

- Jetstack

- Metal³

- Caicloud

- Kubeflow

- Azure AKS Engine

- tensorflow/minigo

- Daisy (Google Compute Image Tools)

- KubeEdge (Kubernetes Native Edge Computing Framework)

- Volcano (Kubernetes Native Batch System)

- Loodse

- Feast

- Falco

- TiDB

- Amazon EKS Distro and Amazon EKS Anywhere

- KubeSphere

- OpenYurt

- KubeVirt

- AWS Controllers for Kubernetes

- Gardener

Jenkins X uses Prow as part of Serverless Jenkins.

Contact us

If you need to contact the maintainers of Prow you have a few options:

- Open an issue in the kubernetes-sigs/prow repo.

- Reach out to the

#prowchannel of the Kubernetes Slack. - Contact one of the code owners in OWNERS or in a more specifically scoped OWNERS file.

Bots home

@k8s-ci-robot lives here and is the face of the Kubernetes Prow instance. Here is a command list for interacting with @k8s-ci-robot and other Prow bots.

2.1 - Architecture

Prow in a Nutshell

Prow creates jobs based on various types of events, such as:

-

GitHub events (e.g., a new PR is created, or is merged, or a person comments “/retest” on a PR),

-

Pub/Sub messages,

-

time (these are created by Horologium and are called periodic jobs), and

-

retesting (triggered by Tide).

Jobs are created inside the Service Cluster as Kubernetes Custom Resources. The Prow Controller Manager takes triggered jobs and schedules them into a build cluster, where they run as Kubernetes pods. Crier then reports the results back to GitHub.

flowchart TD

classDef yellow fill:#ff0

classDef cyan fill:#0ff

classDef pink fill:#f99

subgraph Service Cluster["<span style='font-size: 40px;'><b>Service Cluster</b></span>"]

Deck:::cyan

Prowjobs:::yellow

Crier:::cyan

Tide:::cyan

Horologium:::cyan

Sinker:::cyan

PCM[Prow Controller Manager]:::cyan

Hook:::cyan

subgraph Hook

WebhookHandler["Webhook Handler"]

PluginCat(["'cat' plugin"])

PluginTrigger(["'trigger' plugin"])

end

end

subgraph Build Cluster[<b>Build Cluster</b>]

Pods[(Pods)]:::yellow

end

style Legend fill:#fff,stroke:#000,stroke-width:4px

subgraph Legend["<span style='font-size: 20px;'><b>LEGEND</b></span>"]

direction LR

k8sResource[Kubernetes Resource]:::yellow

prowComponent[Prow Component]:::cyan

hookPlugin([Hook Plugin])

Other

end

Prowjobs <-.-> Deck <-----> |Serve| prow.k8s.io

GitHub ==> |Webhooks| WebhookHandler

WebhookHandler --> |/meow| PluginCat

WebhookHandler --> |/retest| PluginTrigger

Prowjobs <-.-> Tide --> |Retest and Merge| GitHub

Horologium ---> |Create| Prowjobs

PluginCat --> |Comment| GitHub

PluginTrigger --> |Create| Prowjobs

Sinker ---> |Garbage collect| Prowjobs

Sinker --> |Garbage collect| Pods

PCM -.-> |List and update| Prowjobs

PCM ---> |Report| Prowjobs

PCM ==> |Create and Query| Pods

Prowjobs <-.-> |Inform| Crier --> |Report| GitHub

Notes

Note that Prow can also work with Gerrit, albeit with less features. Specifically, neither Tide nor Hook work with Gerrit yet.

3 - Components

Prow Images

This directory includes a sub directory for every Prow component and is where all binary and container images are built. You can find the main packages here. For details about building the binaries and images see “Building, Testing, and Updating Prow”.

Cluster Components

Prow has a microservice architecture implemented as a collection of container images that run as Kubernetes deployments. A brief description of each service component is provided here.

Core Components

crier(doc, code) reports on ProwJob status changes. Can be configured to report to gerrit, github, pubsub, slack, etc.deck(doc, code) presents a nice view of recent jobs, command and plugin help information, the current status and history of merge automation, and a dashboard for PR authors.hook(doc, code) is the most important piece. It is a stateless server that listens for GitHub webhooks and dispatches them to the appropriate plugins. Hook’s plugins are used to trigger jobs, implement ‘slash’ commands, post to Slack, and more. See the plugins doc and code directory for more information on plugins.horologium(doc, code) triggers periodic jobs when necessary.prow-controller-manager(doc, code) manages the job execution and lifecycle for jobs that run in k8s pods. It currently acts as a replacement forplanksinker(doc, code) cleans up old jobs and pods.

Merge Automation

tide(doc, code) manages retesting and merging PRs once they meet the configured merge criteria. See its README for more information.

Optional Components

branchprotector(doc, code) configures github branch protection according to a specified policyexporter(doc, code) exposes metrics about ProwJobs not directly related to a specific Prow componentgcsupload(doc, code)gerrit(doc, code) is a Prow-gerrit adapter for handling CI on gerrit workflowshmac(doc, code) updates HMAC tokens, GitHub webhooks and HMAC secrets for the orgs/repos specified in the Prow config filejenkins-operator(doc, code) is the controller that manages jobs that run on Jenkins. We moved away from using this component in favor of running all jobs on Kubernetes.tot(doc, code) vends sequential build numbers. Tot is only necessary for integration with automation that expects sequential build numbers. If Tot is not used, Prow automatically generates build numbers that are monotonically increasing, but not sequential.status-reconciler(doc, code) ensures changes to blocking presubmits in Prow configuration does not cause in-flight GitHub PRs to get stucksub(doc, code) listen to Cloud Pub/Sub notification to trigger Prow Jobs.

CLI Tools

checkconfig(doc, code) loads and verifies the configuration, useful as a pre-submit.config-bootstrapper(doc, code) bootstraps a configuration that would be incrementally updated by theupdateconfigProw plugingeneric-autobumper(doc, code) automates image version upgrades (e.g. for a Prow deployment) by opening a PR with images changed to their latest version according to a config file.invitations-accepter(doc, code) approves all pending GitHub repository invitationsmkpj(doc, code) createsProwJobsusing Prow configuration.mkpod(doc, code) createsPodsfromProwJobs.peribolos(doc, code) manages GitHub org, team and membership settings according to a config file. Used by kubernetes/orgphony(doc, code) sends fake webhooks for testing hook and plugins.

Pod Utilities

These are small tools that are automatically added to ProwJob pods for jobs that request pod decoration. They are used to transparently provide source code cloning and upload of metadata, logs, and job artifacts to persistent storage. See their README for more information.

Base Images

The container images in images are used as base images for Prow components.

TODO: undocumented

Deprecated

cm2kc(doc, code) is a CLI tool used to convert a clustermap file to a kubeconfig file. Deprecated because we have moved away from clustermaps; you should usegencredto generate a kubeconfig file directly.grandmatriarchphaino(doc) runs an approximation of a ProwJob on your local workstationtackle(doc, code)

3.1 - Core Components

3.1.1 - Crier

Crier reports your prowjobs on their status changes.

Usage / How to enable existing available reporters

For any reporter you want to use, you need to mount your prow configs and specify --config-path and job-config-path

flag as most of other prow controllers do.

Gerrit reporter

You can enable gerrit reporter in crier by specifying --gerrit-workers=n flag.

Similar to the gerrit adapter, you’ll need to specify --gerrit-projects for

your gerrit projects, and also --cookiefile for the gerrit auth token (leave it unset for anonymous).

Gerrit reporter will send an aggregated summary message, when all gerrit adapter scheduled prowjobs with the same report label finish on a revision. It will also attach a report url so people can find logs of the job.

The reporter will also cast a +1/-1 vote on the prow.k8s.io/gerrit-report-label label of your prowjob,

or by default it will vote on CodeReview label. Where +1 means all jobs on the patshset pass and -1

means one or more jobs failed on the patchset.

Pubsub reporter

You can enable pubsub reporter in crier by specifying --pubsub-workers=n flag.

You need to specify following labels in order for pubsub reporter to report your prowjob:

| Label | Description |

|---|---|

"prow.k8s.io/pubsub.project" |

Your gcp project where pubsub channel lives |

"prow.k8s.io/pubsub.topic" |

The topic of your pubsub message |

"prow.k8s.io/pubsub.runID" |

A user assigned job id. It’s tied to the prowjob, serves as a name tag and help user to differentiate results in multiple pubsub messages |

The service account used by crier will need to have pubsub.topics.publish permission in the project where pubsub channel lives, e.g. by assigning the roles/pubsub.publisher IAM role

Pubsub reporter will report whenever prowjob has a state transition.

You can check the reported result by list the pubsub topic.

GitHub reporter

You can enable github reporter in crier by specifying --github-workers=N flag (N>0).

You also need to mount a github oauth token by specifying --github-token-path flag, which defaults to /etc/github/oauth.

If you have a ghproxy deployed, also remember to point --github-endpoint to your ghproxy to avoid token throttle.

The actual report logic is in the github report library for your reference.

Slack reporter

NOTE: if enabling the slack reporter for the first time, Crier will message to the Slack channel for all ProwJobs matching the configured filtering criteria.

You can enable the Slack reporter in crier by specifying the --slack-workers=n and --slack-token-file=path-to-tokenfile flags.

The --slack-token-file flag takes a path to a file containing a Slack OAuth Access Token.

The OAuth Access Token can be obtained as follows:

- Navigate to: https://api.slack.com/apps.

- Click Create New App.

- Provide an App Name (e.g. Prow Slack Reporter) and Development Slack Workspace (e.g. Kubernetes).

- Click Permissions.

- Add the

chat:write.publicscope using the Scopes / Bot Token Scopes dropdown and Save Changes. - Click Install App to Workspace

- Click Allow to authorize the Oauth scopes.

- Copy the OAuth Access Token.

Once the access token is obtained, you can create a secret in the cluster using that value:

kubectl create secret generic slack-token --from-literal=token=< access token >

Furthermore, to make this token available to Crier, mount the slack-token secret using a volume and set the --slack-token-file flag in the deployment spec.

apiVersion: apps/v1

kind: Deployment

metadata:

name: crier

labels:

app: crier

spec:

selector:

matchLabels:

app: crier

template:

metadata:

labels:

app: crier

spec:

containers:

- name: crier

image: gcr.io/k8s-prow/crier:v20200205-656133e91

args:

- --slack-workers=1

- --slack-token-file=/etc/slack/token

- --config-path=/etc/config/config.yaml

- --dry-run=false

volumeMounts:

- mountPath: /etc/config

name: config

readOnly: true

- name: slack

mountPath: /etc/slack

readOnly: true

volumes:

- name: slack

secret:

secretName: slack-token

- name: config

configMap:

name: config

Additionally, in order for it to work with Prow you must add the following to your config.yaml:

NOTE:

slack_reporter_configsis a map oforg,org/repo, or*(i.e. catch-all wildcard) to a set of slack reporter configs.

slack_reporter_configs:

# Wildcard (i.e. catch-all) slack config

"*":

# default: None

job_types_to_report:

- presubmit

- postsubmit

# default: None

job_states_to_report:

- failure

- error

# required

channel: my-slack-channel

# The template shown below is the default

report_template: "Job {{.Spec.Job}} of type {{.Spec.Type}} ended with state {{.Status.State}}. <{{.Status.URL}}|View logs>"

# "org/repo" slack config

istio/proxy:

job_types_to_report:

- presubmit

job_states_to_report:

- error

channel: istio-proxy-channel

# "org" slack config

istio:

job_types_to_report:

- periodic

job_states_to_report:

- failure

channel: istio-channel

The channel, job_states_to_report and report_template can be overridden at the ProwJob level via the reporter_config.slack field:

postsubmits:

some-org/some-repo:

- name: example-job

decorate: true

reporter_config:

slack:

channel: 'override-channel-name'

job_states_to_report:

- success

report_template: "Overridden template for job {{.Spec.Job}}"

spec:

containers:

- image: alpine

command:

- echo

To silence notifications at the ProwJob level you can pass an empty slice to reporter_config.slack.job_states_to_report:

postsubmits:

some-org/some-repo:

- name: example-job

decorate: true

reporter_config:

slack:

job_states_to_report: []

spec:

containers:

- image: alpine

command:

- echo

Implementation details

Crier supports multiple reporters, each reporter will become a crier controller. Controllers

will get prowjob change notifications from a shared informer, and you can specify --num-workers to change parallelism.

If you are interested in how client-go works under the hood, the details are explained in this doc

Adding a new reporter

Each crier controller takes in a reporter.

Each reporter will implement the following interface:

type reportClient interface {

Report(pj *v1.ProwJob) error

GetName() string

ShouldReport(pj *v1.ProwJob) bool

}

GetName will return the name of your reporter, the name will be used as a key when we store previous

reported state for each prowjob.

ShouldReport will return if a prowjob should be handled by current reporter.

Report is the actual report logic happens. Return nil means report is successful, and the reported

state will be saved in the prowjob. Return an actual error if report fails, crier will re-add the prowjob

key to the shared cache and retry up to 5 times.

You can add a reporter that implements the above interface, and add a flag to turn it on/off in crier.

Migration from plank for github report

Both plank and crier will call into the github report lib when a prowjob needs to be reported, so as a user you only want to make one of them to report :-)

To disable GitHub reporting in Plank, add the --skip-report=true flag to the Plank deployment.

Before migrating, be sure plank is setting the PrevReportStates field by describing a finished presubmit prowjob. Plank started to set this field after commit 2118178, if not, you want to upgrade your plank to a version includes this commit before moving forward.

you can check this entry by:

$ kubectl get prowjobs -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.prev_report_states.github-reporter}{"\n"}'

...

fafec9e1-3af2-11e9-ad1a-0a580a6c0d12 failure

fb027a97-3af2-11e9-ad1a-0a580a6c0d12 success

fb0499d3-3af2-11e9-ad1a-0a580a6c0d12 failure

fb05935f-3b2b-11e9-ad1a-0a580a6c0d12 success

fb05e1f1-3af2-11e9-ad1a-0a580a6c0d12 error

fb06c55c-3af2-11e9-ad1a-0a580a6c0d12 success

fb09e7d8-3abb-11e9-816a-0a580a6c0f7f success

You want to add a crier deployment, similar to ours config/prow/cluster/crier_deployment.yaml, flags need to be specified:

- point

config-pathand--job-config-pathto your prow config and job configs accordingly. - Set

--github-workerto be number of parallel github reporting threads you need - Point

--github-endpointto ghproxy, if you have set that for plank - Bind github oauth token as a secret and set

--github-token-pathif you’ve have that set for plank.

In your plank deployment, you can

- Remove the

--github-endpointflags - Remove the github oauth secret, and

--github-token-pathflag if set - Flip on

--skip-report, so plank will skip the reporting logic

Both change should be deployed at the same time, if have an order preference, deploy crier first since report twice should just be a no-op.

We will send out an announcement when we cleaning up the report dependency from plank in later 2019.

3.1.2 - Deck

Running Deck locally

Deck can be run locally by executing ./cmd/deck/runlocal. The scripts starts Deck via

Bazel using:

- pre-generated data (extracted from a running Prow instance)

- the local

config.yaml - the local static files, template files and lenses

Open your browser and go to: http://localhost:8080

Debugging via Intellij / VSCode

This section describes how to debug Deck locally by running it inside VSCode or Intellij.

# Prepare assets

make build-tarball PROW_IMAGE=cmd/deck

mkdir -p /tmp/deck

tar -xvf ./_bin/deck.tar -C /tmp/deck

cd /tmp/deck

# Expand all layers

for tar in *.tar.gz; do tar -xvf $tar; done

# Start Deck via go or in your IDE with the following arguments:

--config-path=./config/prow/config.yaml

--job-config-path=./config/jobs

--hook-url=http://prow.k8s.io

--spyglass

--template-files-location=/tmp/deck/var/run/ko/template

--static-files-location=/tmp/deck/var/run/ko/static

--spyglass-files-location=/tmp/deck/var/run/ko/lenses

Rerun Prow Job via Prow UI

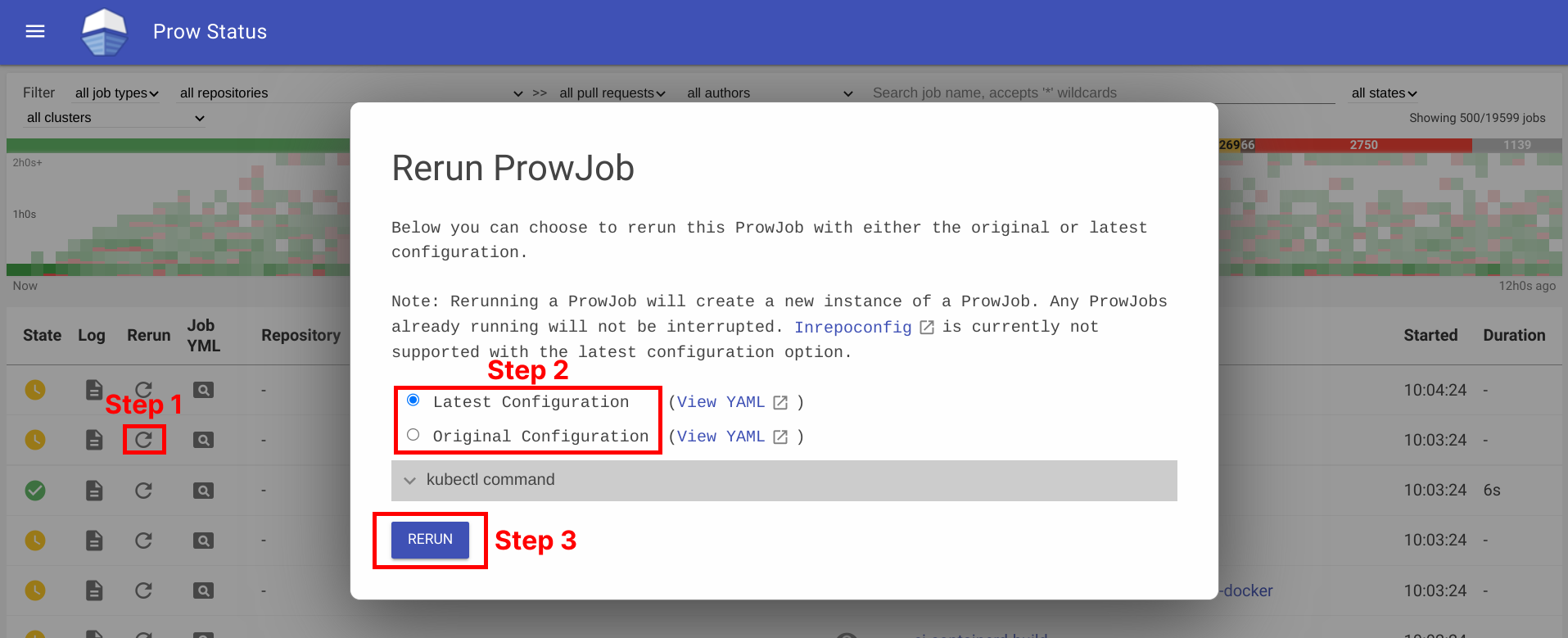

Rerun prow job can be done by visiting prow UI, locate prow job and rerun job by clicking on the ↻ button, selecting a configuration option, and then clicking Rerun button. For prow on github, the permission is controlled by github membership, and configured as part of deck configuration, see rerun_auth_configs for k8s prow.

See example below:

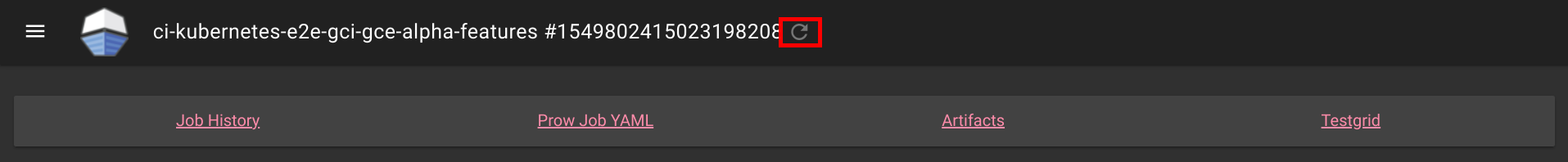

Rerunning can also be done on Spyglass:

This is also available for non github prow if the frontend is secured and allow_anyone is set to true for the job.

Abort Prow Job via Prow UI

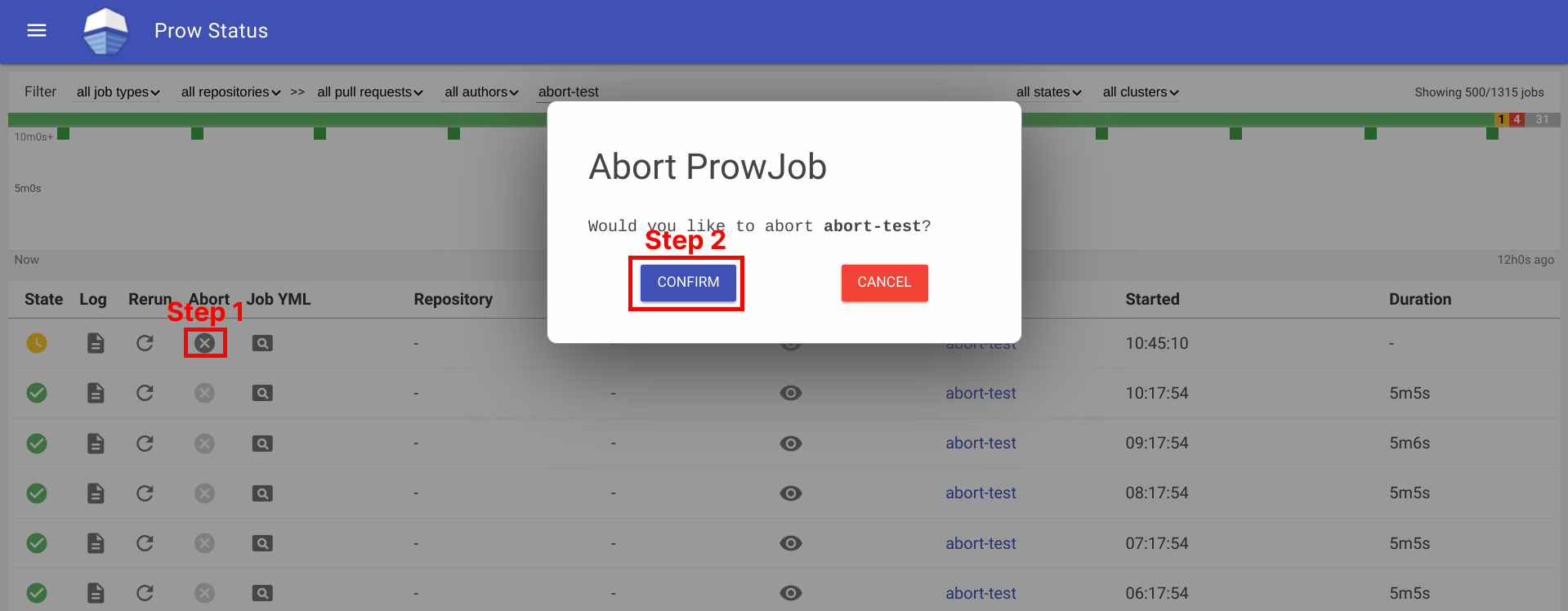

Aborting a prow job can be done by visiting the prow UI, locate the prow job and abort the job by clicking on the ✕ button, and then clicking Confirm button. For prow on github, the permission is controlled by github membership, and configured as part of deck configuration, see rerun_auth_configs for k8s prow. Note, the abort functionality uses the same field as rerun for permissions.

See example below:

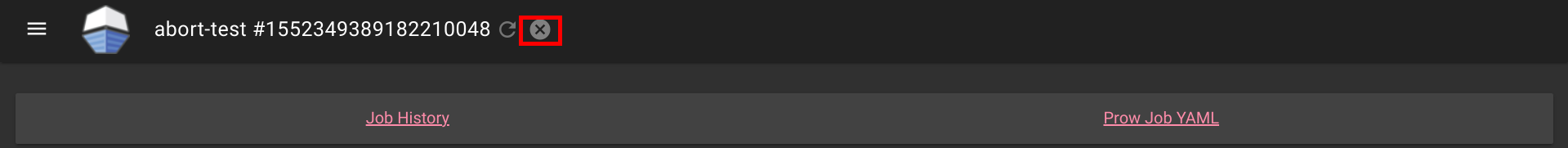

Aborting can also be done on Spyglass:

This is also available for non github prow if the frontend is secured and allow_anyone is set to true for the job.

3.1.2.1 - How to setup GitHub Oauth

This document helps configure GitHub Oauth, which is required for PR Status and for the rerun button on Prow Status. If OAuth is configured, Prow will perform GitHub actions on behalf of the authenticated users. This is necessary to fetch information about pull requests for the PR Status page and to authenticate users when checking if they have permission to rerun jobs via the rerun button on Prow Status.

Set up secrets

The following steps will show you how to set up an OAuth app.

-

Create your GitHub Oauth application

https://developer.github.com/apps/building-oauth-apps/creating-an-oauth-app/

Make sure to create a GitHub Oauth App and not a regular GitHub App.

The callback url should be:

<PROW_BASE_URL>/github-login/redirect -

Create a secret file for GitHub OAuth that has the following content. The information can be found in the GitHub OAuth developer settings:

client_id: <APP_CLIENT_ID> client_secret: <APP_CLIENT_SECRET> redirect_url: <PROW_BASE_URL>/github-login/redirect final_redirect_url: <PROW_BASE_URL>/prIf Prow is expected to work with private repositories, add

scopes: - repo -

Create another secret file for the cookie store. This cookie secret will also be used for CSRF protection. The file should contain a random 32-byte length base64 key. For example, you can use

opensslto generate the keyopenssl rand -out cookie.txt -base64 32 -

Use

kubectl, which should already point to your Prow cluster, to create secrets using the command:kubectl create secret generic github-oauth-config --from-file=secret=<PATH_TO_YOUR_GITHUB_SECRET>kubectl create secret generic cookie --from-file=secret=<PATH_TO_YOUR_COOKIE_KEY_SECRET> -

To use the secrets, you can either:

-

Mount secrets to your deck volume:

Open

test-infra/config/prow/cluster/deck_deployment.yaml. Undervolumestoken, add:- name: oauth-config secret: secretName: github-oauth-config - name: cookie-secret secret: secretName: cookieUnder

volumeMountstoken, add:- name: oauth-config mountPath: /etc/githuboauth readOnly: true - name: cookie-secret mountPath: /etc/cookie readOnly: true -

Add the following flags to

deck:- --github-oauth-config-file=/etc/githuboauth/secret - --oauth-url=/github-login - --cookie-secret=/etc/cookie/secretNote that the

--oauth-urlshould eventually be changed to a boolean as described in #13804. -

You can also set your own path to the cookie secret using the

--cookie-secretflag. -

To prevent

deckfrom making mutating GitHub API calls, pass in the--dry-runflag.

-

Using A GitHub bot

The rerun button can be configured so that certain GitHub teams are allowed to trigger certain jobs

from the frontend. In order to make API calls to determine whether a user is on a given team, deck needs

to use the access token of an org member.

If not, you can create a new GitHub account, make it an org member, and set up a personal access token here.

Then create the access token secret:

kubectl create secret generic oauth-token --from-file=secret=<PATH_TO_ACCESS_TOKEN>

Add the following to volumes and volumeMounts:

volumeMounts:

- name: oauth-token

mountPath: /etc/github

readOnly: true

volumes:

- name: oauth-token

secret:

secretName: oauth-token

Pass the file path to deck as a flag:

--github-token-path=/etc/github/oauth

You can optionally use ghproxy to reduce token usage.

Run PR Status endpoint locally

Firstly, you will need a GitHub OAuth app. Please visit step 1 - 3 above.

When testing locally, pass the path to your secrets to deck using the --github-oauth-config-file and --cookie-secret flags.

Run the command:

go build . && ./deck --config-path=../../../config/prow/config.yaml --github-oauth-config-file=<PATH_TO_YOUR_GITHUB_OAUTH_SECRET> --cookie-secret=<PATH_TO_YOUR_COOKIE_SECRET> --oauth-url=/pr

Using a test cluster

If hosting your test instance on http instead of https, you will need to use the --allow-insecure flag in deck.

3.1.2.2 - CSRF attacks

In Deck, we make a number of POST requests that require user authentication. These requests are susceptible

to cross site request forgery (CSRF) attacks,

in which a malicious actor tricks an already authenticated user into submitting a form to one of these endpoints

and performing one of these protected actions on their behalf.

Protection

If --cookie-secret is 32 or more bytes long, CSRF protection is automatically enabled.

If --rerun-creates-job is specified, CSRF protection is required, and accordingly,

--cookie-secret must be 32 bytes long.

We protect against CSRF attacks using the gorilla CSRF library, implemented

in #13323. Broadly, this protection works by ensuring that

any POST request originates from our site, rather than from an outside link.

We do so by requiring that every POST request made to Deck includes a secret token either in the request header

or in the form itself as a hidden input.

We cryptographically generate the CSRF token using the --cookie-secret and a user session value and

include it as a header in every POST request made from Deck.

If you are adding a new POST request, you must include the CSRF token as described in the gorilla

documentation.

The gorilla library expects a 32-byte CSRF token. If --cookie-secret is sufficiently long,

direct job reruns will be enabled via the /rerun endpoint. Otherwise, if --cookie-secret is less

than 32 bytes and --rerun-creates-job is enabled, Deck will refuse to start. Longer values will

work but should be truncated.

By default, gorilla CSRF requires that all POST requests are made over HTTPS. If developing locally

over HTTP, you must specify --allow-insecure to Deck, which will configure both gorilla CSRF

and GitHub oauth to allow HTTP requests.

CSRF can also be executed by tricking a user into making a state-mutating GET request. All

state-mutating requests must therefore be POST requests, as gorilla CSRF does not secure GET

requests.

3.1.3 - Hook

This is a placeholder page. Some contents needs to be filled.

3.1.4 - Horologium

Horologium is the Prow component that creates ProwJobs for configured

periodic jobs. It watches existing ProwJobs, evaluates each periodic job’s

schedule, and creates a new ProwJob when the previous run is complete and the

next run is due.

How Horologium Schedules Jobs

Horologium supports two scheduling modes for periodic jobs:

interval: run after the configured duration has elapsed since the previous run. Whenminimum_intervalis set, the interval is measured from the previous run’s completion time.cron: run when the configured cron schedule fires.

Horologium retains the latest ProwJob for each active periodic job so it can determine whether the next run should start. Sinker uses the same latest-run information to avoid garbage-collecting the latest active periodic ProwJob.

If a periodic job config includes retry settings, Horologium can also create retry ProwJobs until the configured attempt limit is reached.

Configuration

Periodic jobs are configured in the Prow job configuration:

periodics:

- name: ci-example-periodic

interval: 1h

decorate: true

spec:

containers:

- image: alpine

command:

- /bin/sh

args:

- -c

- echo "hello from horologium"

Horologium itself reads the horologium section of the main Prow configuration.

The main component-specific option is tick_interval, which controls how often

Horologium checks whether new periodic jobs need to be created:

horologium:

tick_interval: 1m

If tick_interval is unset, Horologium checks every minute.

Required Access

Horologium needs access to the infrastructure cluster to list, watch, and create ProwJobs in the configured ProwJob namespace.

CLI Flags

Horologium uses the standard Prow config, Kubernetes, controller-manager, and instrumentation flags. The most commonly used flags are:

--config-path: Path to the main Prow configuration.--job-config-path: Path to the job configuration.--kubeconfig: Path to the kubeconfig for the infrastructure cluster.--dry-run: Controls whether Horologium creates ProwJobs. The default istrue; production deployments must set--dry-run=false.

Horologium exposes Prometheus metrics under the horologium component name.

3.1.5 - Prow-Controller-Manager

prow-controller-manager manages the job execution and lifecycle for jobs running in k8s.

It currently acts as a replacement for Plank.

It is intended to eventually replace other components, such as Sinker and Crier. See the tracking issue #17024 for details.

Advantages

- Eventbased rather than cronbased, hence reacting much faster to changes in prowjobs or pods

- Per-Prowjob retrying, meaning genuinely broken prowjobs will not be retried forever and transient errors will be retried much quicker

- Uses a cache for the build cluster rather than doing a LIST every 30 seconds, reducing the load on the build clusters api server

Exclusion with other components

This is mutually exclusive with only Plank. Only one of them may have more than zero replicas at the same time.

Usage

$ go run ./cmd/prow-controller-manager --help

Configuration

3.1.6 - Sinker

This is a placeholder page. Some contents needs to be filled.

3.1.7 - Tide

Tide is a Prow component for managing a pool of GitHub PRs that match a given set of criteria. It will automatically retest PRs that meet the criteria (“tide comes in”) and automatically merge them when they have up-to-date passing test results (“tide goes out”).

Documentation

Features

- Automatically runs batch tests and merges multiple PRs together whenever possible.

- Ensures that PRs are tested against the most recent base branch commit before they are allowed to merge.

- Maintains a GitHub status context that indicates if each PR is in a pool or what requirements are missing.

- Supports blocking merge to individual branches or whole repos using specifically labelled GitHub issues.

- Exposes Prometheus metrics.

- Supports repos that have ‘optional’ status contexts that shouldn’t be required for merge.

- Serves live data about current pools and a history of actions which can be consumed by Deck to populate the Tide dashboard, the PR dashboard, and the Tide history page.

- Scales efficiently so that a single instance with a single bot token can provide merge automation to dozens of orgs and repos with unique merge criteria. Every distinct ‘org/repo:branch’ combination defines a disjoint merge pool so that merges only affect other PRs in the same branch.

- Provides configurable merge modes (‘merge’, ‘squash’, or ‘rebase’).

History

Tide was created in 2017 by @spxtr to replace mungegithub’s Submit Queue. It was designed to manage a large number of repositories across organizations without using many API rate limit tokens by identifying mergeable PRs with GitHub search queries fulfilled by GitHub’s v4 GraphQL API.

Flowchart

graph TD;

subgraph github[GitHub]

subgraph org/repo/branch

head-ref[HEAD ref];

pullrequest[Pull Request];

status-context[Status Context];

end

end

subgraph prow-cluster

prowjobs[Prowjobs];

config.yaml;

end

subgraph tide-workflow

Tide;

pools;

divided-pools;

pools-->|dividePool|divided-pools;

filtered-pools;

subgraph syncSubpool

pool-i;

pool-n;

pool-n1;

accumulated-batch-prowjobs-->|filter out <br> incorrect refs <br> no longer meet merge requirement|valid-batches;

valid-batches-->accumulated-batch-success;

valid-batches-->accumulated-batch-pending;

status-context-->|fake prowjob from context|filtered-prowjobs;

filtered-prowjobs-->|accumulate|map_context_best-result;

map_context_best-result-->map_pr_overall-results;

map_pr_overall-results-->accumulated-success;

map_pr_overall-results-->accumulated-pending;

map_pr_overall-results-->accumulated-stale;

subgraph all-accumulated-pools

accumulated-batch-success;

accumulated-batch-pending;

accumulated-success;

accumulated-pending;

accumulated-stale;

end

accumulated-batch-success-..->accumulated-batch-success-exist{Exist};

accumulated-batch-pending-..->accumulated-batch-pending-exist{Exist};

accumulated-success-..->accumulated-success-exist{Exist};

accumulated-pending-..->accumulated-pending-exist{Exist};

accumulated-stale-..->accumulated-stale-exist{Exist};

pool-i-..->require-presubmits{Require Presubmits};

accumulated-batch-success-exist-->|yes|merge-batch[Merge batch];

merge-batch-->|Merge Pullrequests|pullrequest;

accumulated-batch-success-exist-->|no|accumulated-batch-pending-exist;

accumulated-batch-pending-exist-->|no|accumulated-success-exist;

accumulated-success-exist-->|yes|merge-single[Merge Single];

merge-single-->|Merge Pullrequests|pullrequest;

require-presubmits-->|no|wait;

accumulated-success-exist-->|no|require-presubmits;

require-presubmits-->|yes|accumulated-pending-exist;

accumulated-pending-exist-->|no|can-trigger-batch{Can Trigger New Batch};

can-trigger-batch-->|yes|trigger-batch[Trigger new batch];

can-trigger-batch-->|no|accumulated-stale-exist;

accumulated-stale-exist-->|yes|trigger-highest-pr[Trigger Jobs on Highest Priority PR];

accumulated-stale-exist-->|no|wait;

end

end

Tide-->pools[Pools - grouped PRs, prow jobs by org/repo/branch];

pullrequest-->pools;

divided-pools-->|filter out prs <br> failed prow jobs <br> pending non prow checks <br> merge conflict <br> invalid merge method|filtered-pools;

head-ref-->divided-pools;

prowjobs-->divided-pools;

config.yaml-->divided-pools;

filtered-pools-->pool-i;

filtered-pools-->pool-n;

filtered-pools-->pool-n1[pool ...];

pool-i-->|report tide status|status-context;

pool-i-->|accumulateBatch|accumulated-batch-prowjobs;

pool-i-->|accumulateSerial|filtered-prowjobs;

classDef plain fill:#ddd,stroke:#fff,stroke-width:4px,color:#000;

classDef k8s fill:#326ce5,stroke:#fff,stroke-width:4px,color:#fff;

classDef github fill:#fff,stroke:#bbb,stroke-width:2px,color:#326ce5;

classDef pools-def fill:#00ffff,stroke:#bbb,stroke-width:2px,color:#326ce5;

classDef decision fill:#ffff00,stroke:#bbb,stroke-width:2px,color:#326ce5;

classDef outcome fill:#00cc66,stroke:#bbb,stroke-width:2px,color:#326ce5;

class prowjobs,config.yaml k8s;

class Tide plain;

class status-context,head-ref,pullrequest github;

class accumulated-batch-success,accumulated-batch-pending,accumulated-success,accumulated-pending,accumulated-stale pools-def;

class accumulated-batch-success-exist,accumulated-batch-pending-exist,accumulated-success-exist,accumulated-pending-exist,accumulated-stale-exist,can-trigger-batch,require-presubmits decision;

class trigger-highest-pr,trigger-batch,merge-single,merge-batch,wait outcome;

3.1.7.1 - Configuring Tide

Configuration of Tide is located under the config/prow/config.yaml file. All configuration for merge behavior and criteria belongs in the tide yaml struct, but it may be necessary to also configure presubmits for Tide to run against PRs (see ‘Configuring Presubmit Jobs’ below).

This document will describe the fields of the tide configuration and how to populate them, but you can also check out the GoDocs for the most up to date configuration specification.

To deploy Tide for your organization or repository, please see how to get started with prow.

General configuration

The following configuration fields are available:

sync_period: The field specifies how often Tide will sync jobs with GitHub. Defaults to 1m.status_update_period: The field specifies how often Tide will update GitHub status contexts. Defaults to the value ofsync_period.queries: List of queries (described below).merge_method: A key/value pair of anorg/repoas the key and merge method to override the default method of merge as value. Valid options aresquash,rebase, andmerge. Defaults tomerge.merge_commit_template: A mapping fromorg/repoororgto a set of Go templates to use when creating the title and body of merge commits. Go templates are evaluated with aPullRequest(seePullRequesttype). This field and map keys are optional.target_urls: A mapping from “*”,, or <org/repo> to the URL for the tide status contexts. The most specific key that matches will be used. pr_status_base_urls: A mapping from “*”,, or <org/repo> to the base URL for the PR status page. If specified, this URL is used to construct a link that will be used for the tide status context. It is mutually exclusive with the target_urlsfield.max_goroutines: The maximum number of goroutines spawned inside the component to handle org/repo:branch pools. Defaults to 20. Needs to be a positive number.blocker_label: The label used to identify issues which block merges to repository branches.squash_label: The label used to ask Tide to use the squash method when merging the labeled PR.rebase_label: The label used to ask Tide to use the rebase method when merging the labeled PR.merge_label: The label used to ask Tide to use the merge method when merging the labeled PR.

Merge Blocker Issues

Tide supports temporary holds on merging into branches via the blocker_label configuration option.

In order to use this option, set the blocker_label configuration option for the Tide deployment.

Then, when blocking merges is required, if an open issue is found with the label it will block merges to

all branches for the repo. In order to scope the branches which are blocked, add a branch:name token

to the issue title. These tokens can be repeated to select multiple branches and the tokens also support

quoting, so branch:"name" will block the name branch just as branch:name would.

Queries

The queries field specifies a list of queries.

Each query corresponds to a set of open PRs as candidates for merging.

It can consist of the following dictionary of fields:

orgs: List of queried organizations.repos: List of queried repositories.excludedRepos: List of ignored repositories.labels: List of labels any given PR must posses.missingLabels: List of labels any given PR must not posses.excludedBranches: List of branches that get excluded when querying therepos.includedBranches: List of branches that get included when querying therepos.author: The author of the PR.reviewApprovedRequired: If set, each PR in the query must have at least one approved GitHub pull request review present for merge. Defaults tofalse.

Under the hood, a query constructed from the fields follows rules described in https://help.github.com/articles/searching-issues-and-pull-requests/. Therefore every query is just a structured definition of a standard GitHub search query which can be used to list mergeable PRs. The field to search token correspondence is based on the following mapping:

orgs->org:kubernetesrepos->repo:kubernetes/test-infralabels->label:lgtmmissingLabels->-label:do-not-mergeexcludedBranches->-base:devincludedBranches->base:masterauthor->author:batmanreviewApprovedRequired->review:approved

Every PR that needs to be rebased or is failing required statuses is filtered from the pool before processing

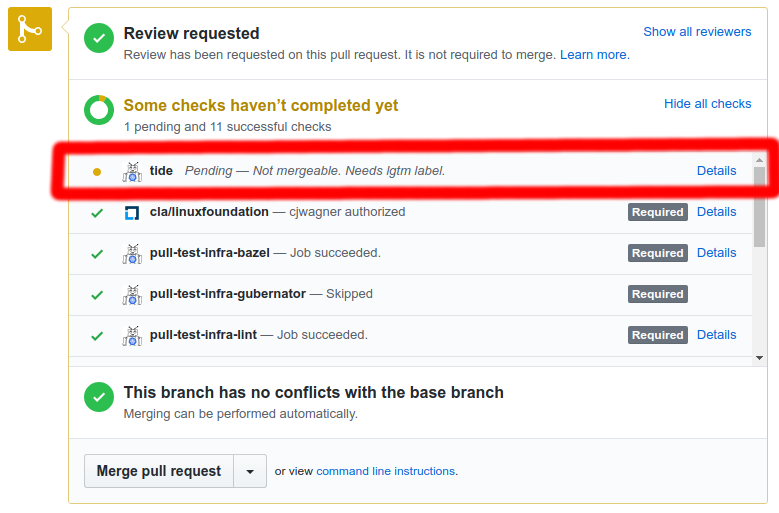

Context Policy Options